Working efficiently in Langdock means using your AI resources wisely. Whether you’re chatting with models, building agents, running workflows, or integrating with your existing tools, a few simple habits can help you stay well within your usage limits while getting better results. And don’t worry: Langdock will never fully cut you off. Even if you hit your usage limits, you’ll always have a base model available as a fallback so you can continue using Langdock.

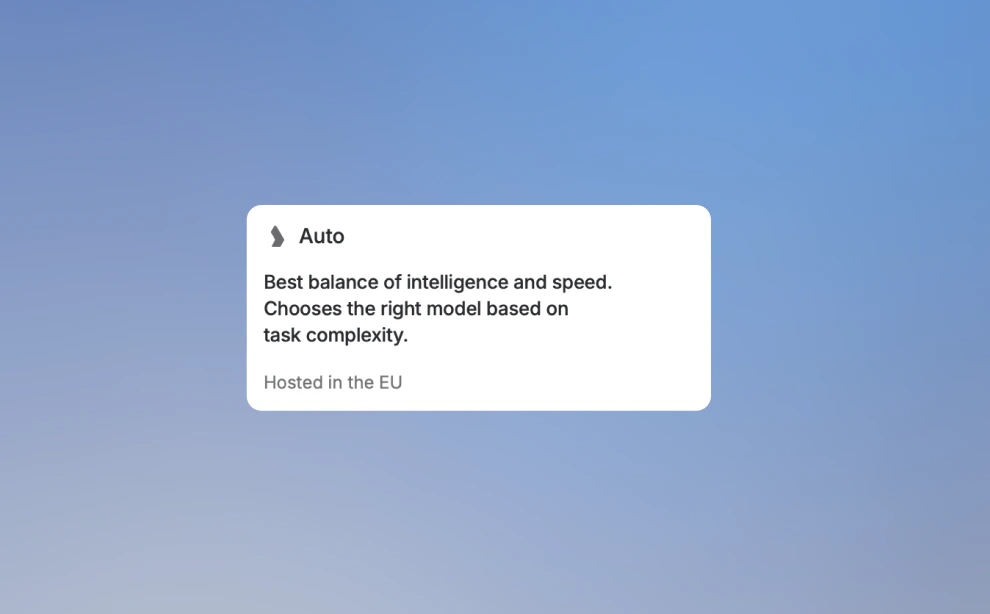

Not sure which model to pick?

Select Auto mode from the model selector and let Langdock choose for you. Auto analyzes your first message to understand the request and estimate its complexity, then picks a suitable model for the conversation. It is designed for everyday work: quick questions, drafting, summarizing, rewriting, and analysis. For tasks that require maximum reasoning depth, you can still switch to a specific model at any point.

General best practices

The model picker shows relative usage compared to GPT-5.2, rounded to one decimal. GPT-5.2 is 1.0x. Use lighter models for routine work and stronger models for complex analysis, planning, or creative work. See our model guide for the full recommendation.

| Usage band | Model | Relative usage |
|---|---|---|
| Light | GPT-5.4 Mini | 0.3x |
| Efficient default | Haiku 4.5 | 0.8x |
| Balanced | GPT-5.2 | 1.0x |
| Step up | GPT-5.4 | 1.5x |
| Strong generalist | Sonnet 4.6 | 3.1x |
| Frontier reasoning | Opus 4.7 | 8.0x |
| Rare top runs | GPT-5.2 Pro | 24.0x |
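To get a feel for what these multipliers mean in practice, here is a minimal Python sketch that compares two ways of handling the same 20 messages. It assumes every message is the same size, so usage scales linearly with the multiplier; real usage also depends on message and file length. The function name `relative_cost` and the unit of "GPT-5.2 message equivalents" are illustrative and not part of Langdock.

```python
# Relative usage multipliers copied from the table above (GPT-5.2 = 1.0x).
RELATIVE_USAGE = {
    "GPT-5.4 Mini": 0.3,
    "Haiku 4.5": 0.8,
    "GPT-5.2": 1.0,
    "GPT-5.4": 1.5,
    "Sonnet 4.6": 3.1,
    "Opus 4.7": 8.0,
    "GPT-5.2 Pro": 24.0,
}


def relative_cost(messages_per_model: dict[str, int]) -> float:
    """Return usage in 'GPT-5.2 message equivalents'.

    Assumption: all messages are the same size, so each message
    simply costs its model's multiplier.
    """
    return sum(
        RELATIVE_USAGE[model] * count
        for model, count in messages_per_model.items()
    )


# 20 messages, all sent to the frontier model.
all_frontier = relative_cost({"Opus 4.7": 20})

# The same 20 messages: 15 routine ones on a light model, 5 hard ones on the frontier model.
mixed = relative_cost({"GPT-5.4 Mini": 15, "Opus 4.7": 5})

print(f"All on Opus 4.7: {all_frontier:.1f}")
print(f"Mixed:           {mixed:.1f}")
```

Under these assumptions, routing the routine messages to a lighter model cuts usage to roughly a quarter while keeping the frontier model for the work that needs it.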

- Provide clear instructions, include relevant context, and specify the format you’d like. Be careful with large files. The bigger the document, the more of your usage each message consumes. When possible, reference specific sections rather than entire documents.

- Before starting a conversation, take a moment to think about what you actually need. Can you combine related questions into a single message? Which information does the model need to understand your request right away?

- Take a quick look at your message before hitting send. Is your message clear and does it contain all necessary information? A few seconds of review can prevent unnecessary follow-up messages and wasted usage.

Chat

Conversations accumulate cost as they grow. A few habits keep things efficient:

- Start a new conversation when you move to a new topic. The model processes the full history on every message, so long conversations get expensive fast.

- Not happy with the response, or made a mistake? Use the edit icon to refine your original prompt rather than writing a new message from scratch. This keeps your conversation concise.

- Save prompts that work well to your prompt library using the “+” icon. Reusing a well-crafted prompt is almost always better than starting from scratch.

- Constrain the length of the output by adding a simple instruction to your prompt like: “Max 5 Bullet Points”, or “Use at most 50 words”. This forces the model to prioritize what matters most, saving tokens and usually producing a sharper, more useful answer.
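Why long conversations get expensive can be shown with a little arithmetic. The sketch below is a simplified model, not Langdock's actual accounting: it assumes each turn (your message plus the reply) adds a fixed number of tokens and that the full history is read again on every message. The function name and the figure of 500 tokens per turn are illustrative.

```python
def tokens_processed(num_messages: int, tokens_per_turn: int) -> int:
    """Total tokens read over a conversation where every new message
    re-sends the whole history (a simplifying assumption)."""
    total = 0
    history = 0
    for _ in range(num_messages):
        history += tokens_per_turn  # the new turn joins the history
        total += history            # the model reads the full history again
    return total


# One 20-message conversation that drifts across two topics...
one_long = tokens_processed(20, 500)

# ...versus two separate 10-message conversations, one per topic.
two_short = 2 * tokens_processed(10, 500)

print(one_long)   # 105000
print(two_short)  # 55000
```

In this model the total grows roughly with the square of the conversation length, so splitting one long conversation into two shorter ones nearly halves the tokens processed.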

Agents

A well-built agent saves your team from explaining the same context over and over. A few things that make a real difference:

- Create more specific agents. Configure specific system instructions, use only relevant documents, and define the constraints to produce accurate results.

- If your most-used agents are running users into limits, consider switching to a more resource-efficient model and refining the prompt.

- If you’re repeatedly setting up the same context in Chat, that’s a sign you should create a dedicated agent instead. Agents retain their configuration across conversations.

- When you build an agent that works well, share it. This prevents multiple team members from each spending their own usage to set up equivalent configurations.

Workflows

Workflow usage is billed separately per run and does not count towards your personal usage limits. Admins can set controls and cost limits.

- Design efficient workflows by minimizing unnecessary steps and combining operations instead of chaining many small ones.

- Add conditions early in your workflow to skip branches that don’t apply, rather than processing everything and filtering at the end.

- Not every step in a workflow needs a frontier model. Use more resource-efficient models for simple extraction or formatting steps.

- When building workflows, fill the node parameters manually where you can. Auto Mode and Prompt AI are convenient, but they add cost to every run.

- If your workflow is triggered by events (form submissions, schedules, integration triggers), make sure the trigger conditions are specific enough to avoid unnecessary runs. A workflow that fires on every message when it only needs to fire on specific keywords is wasting runs.
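The idea behind specific triggers and early conditions can be sketched in plain Python. This is not Langdock's workflow configuration or API; it only illustrates the principle of checking a cheap condition before running a costly step. The keyword list, the function names, and the stand-in `fake_model_step` are all hypothetical.

```python
# Hypothetical keywords that should start the workflow.
TRIGGER_KEYWORDS = ("invoice", "refund")


def should_trigger(message: str) -> bool:
    """Cheap check that runs before any model is called."""
    text = message.lower()
    return any(keyword in text for keyword in TRIGGER_KEYWORDS)


def run_workflow(messages, expensive_step):
    """Apply the expensive step only to messages that pass the early check."""
    results = []
    for message in messages:
        if not should_trigger(message):
            continue  # skipped early: no run is spent on this message
        results.append(expensive_step(message))
    return results


# Stand-in for a model call; it records how often it is invoked.
calls = []


def fake_model_step(message: str) -> str:
    calls.append(message)
    return message.upper()


inbox = [
    "Please send the invoice",
    "Lunch at noon?",
    "Refund for order 12",
    "Thanks!",
]
results = run_workflow(inbox, fake_model_step)
print(f"{len(calls)} of {len(inbox)} messages reached the expensive step")
```

Here only two of the four messages reach the costly step. Processing everything and filtering at the end would give the same results but pay for all four.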